The threat landscape has expanded to include more sophisticated attacks on the growing number of connected IoT edge devices used across industries such as manufacturing, energy, and utilities. To improve their business value proposition and drive digital transformation, enterprises have increasingly turned to these connected devices, which creates new openings for cyber attacks. Moreover, bad actors are advancing their techniques by leveraging AI.

To defend against adversarial AI attacks, organizations need edge security solutions that are designed specifically for edge networks and that use AI-based security measures, such as non-deterministic L2/L3 firewalls, intrusion detection and prevention systems (IDS and IPS), and self-adaptive approaches that incorporate pre-trained machine learning models for detecting changes in traffic patterns. This article focuses on AI-based IDS and IPS in particular.

A multi-layered edge security solution that integrates artificial intelligence-based intrusion detection and prevention systems helps detect and prevent distributed denial-of-service (DDoS), botnet, scanning, malware, ransomware, brute-force, and man-in-the-middle attacks, all of which are common and significant threats to IoT devices. By harnessing the capabilities of artificial intelligence, AI EdgeLabs edge security solutions can accurately detect anomalies and potential attacks, enabling organizations to proactively protect their distributed edge infrastructure from cyber-attacks.

An intrusion detection system is a monitoring measure that alerts the operator (the cybersecurity team) when it detects an intrusion, without taking any immediate action itself. In contrast, an intrusion prevention system takes a more aggressive approach: it actively prevents intrusion by blocking malicious traffic.
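The difference between the two can be illustrated with a minimal Python sketch. The `Sensor` and `Verdict` classes and the `"ids"` / `"ips"` mode names are hypothetical and exist only for this illustration; they are not part of the AI EdgeLabs agent.

```python
from dataclasses import dataclass, field


@dataclass
class Verdict:
    """Hypothetical detection result for one traffic source."""
    src_ip: str
    malicious: bool


@dataclass
class Sensor:
    """Hypothetical sensor: mode "ids" only alerts, mode "ips" alerts and blocks."""
    mode: str
    alerts: list = field(default_factory=list)
    blocked: set = field(default_factory=set)

    def handle(self, verdict: Verdict) -> bool:
        """Return True if the traffic is allowed through."""
        if not verdict.malicious:
            return True
        # Both modes notify the operator.
        self.alerts.append(verdict.src_ip)
        if self.mode == "ips":
            # Only the IPS takes active measures.
            self.blocked.add(verdict.src_ip)
            return False
        return True


ids, ips = Sensor("ids"), Sensor("ips")
suspicious = Verdict("10.0.0.5", malicious=True)
print(ids.handle(suspicious), ips.handle(suspicious))  # True False
```

In IDS mode the suspicious traffic still passes and the team is left to respond to the alert; in IPS mode the same verdict results in a block.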

An intrusion detection system monitors network traffic and identifies potential security breaches. These security systems face one significant challenge: the threat of adversarial AI attacks. We have previously discussed rule-based intrusion detection systems (IDS) and intrusion prevention systems (IPS) and why they are not enough to secure your IoT edge infrastructure.

Traditional IDS can detect routine system intrusions but are vulnerable to adversarial AI attacks, in which the attacker injects deceptive input into the AI training data.

The impact of these attacks can appear as false positives and false negatives, leading to the failure of the IDS to detect and prevent cyber threats accurately. A false positive occurs when harmless traffic gets incorrectly flagged as malicious and is blocked from entering the system. Conversely, a false negative is when malicious traffic is considered benign and allowed to enter the system.
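As a small worked example of these two error types, the Python sketch below counts false positives and false negatives from a list of labeled decisions and turns them into rates. The function names and the sample data are made up for illustration and do not come from any real deployment.

```python
def confusion_counts(actual, flagged):
    """Count outcomes from ground truth and IDS decisions (two lists of bools)."""
    tp = fp = tn = fn = 0
    for is_attack, was_flagged in zip(actual, flagged):
        if was_flagged and is_attack:
            tp += 1  # attack correctly flagged
        elif was_flagged and not is_attack:
            fp += 1  # harmless traffic flagged as malicious
        elif not was_flagged and is_attack:
            fn += 1  # attack considered benign
        else:
            tn += 1  # harmless traffic correctly allowed
    return tp, fp, tn, fn


def error_rates(actual, flagged):
    """Return (false positive rate, false negative rate)."""
    tp, fp, tn, fn = confusion_counts(actual, flagged)
    fpr = fp / (fp + tn) if (fp + tn) else 0.0
    fnr = fn / (fn + tp) if (fn + tp) else 0.0
    return fpr, fnr


# Made-up sample: four benign flows followed by two attacks.
actual = [False, False, False, False, True, True]
flagged = [False, True, False, False, True, False]
print(error_rates(actual, flagged))  # (0.25, 0.5)
```

Here one of four benign flows is blocked (a 25% false positive rate) and one of two attacks gets through (a 50% false negative rate).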

Cyber attackers take advantage of these weaknesses to penetrate the edge network and execute cyber-attacks. Adversarial attacks exploit false positives and false negatives to deceive the system into allowing malicious traffic to enter and infiltrate the network. Adversarial AI techniques can modify the input data in a way that is not noticeable to humans yet still causes the AI-based IDS to misclassify it, for example by incorrectly flagging harmless data as malicious. With the integration of robust machine learning models, false positives can be minimized, providing a more comprehensive cybersecurity approach.

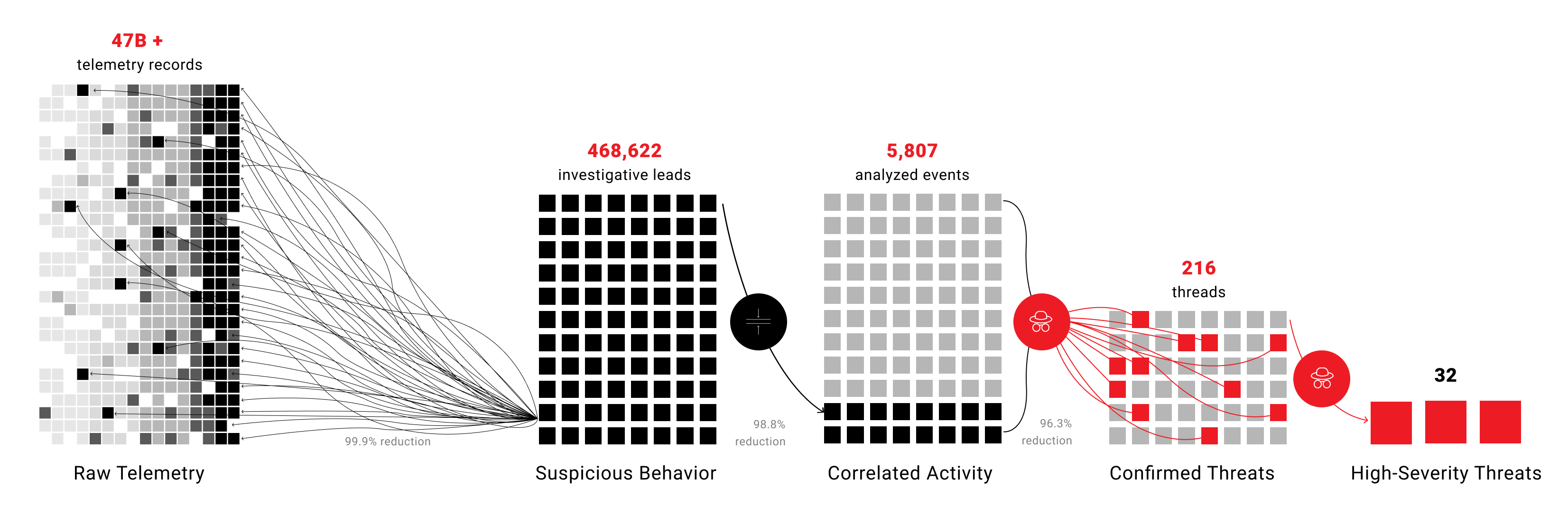

AI EdgeLabs takes a different cybersecurity approach from traditional antivirus solutions, which are based on a database of known malware signatures. Unlike these solutions, our edge security solution detects new malware based on its behavior patterns rather than relying on a pre-existing database. Our solution is equipped with machine learning models that are designed to protect against adversarial attacks by design and to exclude the possibility of receiving invalid data. Additionally, to reduce false positives, we have implemented a layered structure:

- At the first layer, an ensemble of models with different architectures is used to provide more robust predictions.
- The second layer is focused on alert filtering that monitors edge telemetry and system stability rather than network traffic.
- The third layer is responsible for defining the severity of the alert, making it possible to show only critical alerts that pose a threat to the system's stability.

AI EdgeLabs’ three-layer security system is designed to bring false positives down to near zero while mitigating adversarial attacks on AI training data.
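The layered idea can be sketched in a few lines of Python. This is only a simplified illustration under stated assumptions, not our production logic: the function names, the telemetry fields (`cpu`, `restarts`), and every threshold are hypothetical.

```python
from statistics import mean


def ensemble_score(model_scores):
    """Layer 1: average the attack probabilities from several models."""
    return mean(model_scores)


def telemetry_unstable(telemetry, cpu_limit=0.9, restart_limit=3):
    """Layer 2: look at device telemetry (not traffic) for signs of instability."""
    return telemetry["cpu"] >= cpu_limit or telemetry["restarts"] >= restart_limit


def severity(score, critical_at=0.8):
    """Layer 3: grade the alert so that only critical ones need to be shown."""
    return "critical" if score >= critical_at else "low"


def triage(model_scores, telemetry, alert_at=0.5):
    """Return a severity label, or None when no alert should be raised."""
    score = ensemble_score(model_scores)
    if score < alert_at:
        return None  # the ensemble does not consider this an attack
    if not telemetry_unstable(telemetry):
        return None  # filtered out: the device itself looks healthy
    return severity(score)


print(triage([0.9, 0.95, 0.85], {"cpu": 0.97, "restarts": 0}))  # critical
print(triage([0.9, 0.9, 0.9], {"cpu": 0.20, "restarts": 0}))    # None
```

Averaging is just one simple way to combine an ensemble; voting or stacking would fit the same structure. The second call shows how a confident network-level detection can still be suppressed when the telemetry shows no impact on the device.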

State-of-the-art machine learning models for IDS

The AI EdgeLabs platform offers a range of pre-trained artificial intelligence models that leverage an existing knowledge base of threat patterns and attack signatures. The platform’s agent is completely autonomous: it runs all the machine learning models locally and can block IP addresses and other sources of threats.

Our machine learning models are trained on a large number of datasets, including datasets from other researchers who have gathered attack data from different infrastructures. Our lab also conducts extensive testing on various testbeds to replicate cyber attacks and gather data, which is then used to train our machine learning models. Our ML models come in different types: general models trained on data from our laboratory and on other researchers' datasets, and personalized models developed for specific clients.

To fine-tune our personalized models, we have a "learning mode" in our agent, which allows us to receive data from the client in real time. This approach enables us to continuously improve our personalized models and control and validate their effectiveness. We primarily use the "learning mode" during the integration process, but we occasionally apply it for control purposes.

It is worth noting that our general models have demonstrated excellent performance in detecting attacks. As a result, if clients are unable or unwilling to share data for personalized model training, they can still benefit from our highly effective general models.

Our approach to model development and validation ensures that our clients receive the most advanced and effective protection against cyber attacks. We continually enhance our models to keep up with the ever-evolving cyber threat landscape, and our personalized models enable us to provide tailored protection to meet specific client needs.

We prioritize the privacy and security of our clients' sensitive data. To comply with certain regulations and guidelines, we avoid interfering with the client's network data and refrain from analyzing the content of packets. Instead, we focus on analyzing packet headers to identify potential threats and anomalies. By analyzing packet headers, we can obtain valuable information about the source, destination, and traffic type, enabling us to identify potentially malicious behavior that may indicate an attack.
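To show what header-only analysis can look like, here is a minimal Python sketch that reads the fixed 20-byte IPv4 header and never touches the payload. It assumes a raw IPv4 packet is already available as bytes; the function name `parse_ipv4_header` and the sample packet are illustrative only and are not taken from our agent.

```python
import socket
import struct

PROTOCOLS = {1: "ICMP", 6: "TCP", 17: "UDP"}


def parse_ipv4_header(packet: bytes) -> dict:
    """Extract source, destination, and traffic type from an IPv4 header."""
    if len(packet) < 20:
        raise ValueError("packet shorter than the minimum IPv4 header")
    # Fixed header layout: version/IHL, TOS, total length, ID, flags/offset,
    # TTL, protocol, checksum, source address, destination address.
    (version_ihl, _tos, total_len, _ident, _frag,
     ttl, proto, _checksum, src, dst) = struct.unpack("!BBHHHBBH4s4s", packet[:20])
    if version_ihl >> 4 != 4:
        raise ValueError("not an IPv4 packet")
    return {
        "header_len": (version_ihl & 0x0F) * 4,  # IHL is given in 32-bit words
        "total_len": total_len,
        "ttl": ttl,
        "protocol": PROTOCOLS.get(proto, str(proto)),
        "src": socket.inet_ntoa(src),
        "dst": socket.inet_ntoa(dst),
    }


# Hand-built sample packet: a 20-byte header followed by a payload we ignore.
header = struct.pack("!BBHHHBBH4s4s", 0x45, 0, 40, 1, 0, 64, 6, 0,
                     socket.inet_aton("192.168.1.10"), socket.inet_aton("10.0.0.1"))
info = parse_ipv4_header(header + b"payload is never inspected")
print(info["src"], info["dst"], info["protocol"])  # 192.168.1.10 10.0.0.1 TCP
```

Fields like these (who is talking to whom, over which protocol, and how much) are the kind of input that traffic-pattern models can work with without seeing packet contents.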

Get more with AI EdgeLabs security solution

Our edge security solution offers a low noise-to-signal ratio for lateral movement attacks, which is a crucial aspect of effective threat detection. This enables the security system to accurately distinguish between normal and malicious activity, thereby minimizing false alerts. Security systems with a high noise-to-signal ratio often generate so many false alerts that the actual threat goes unnoticed.

As technology advances, cyberattacks are also becoming more sophisticated and diverse. However, with the latest edge security approaches that include AI-based intrusion detection systems and intrusion prevention systems, organizations can protect their infrastructure against a wide range of attacks.

Get in touch with our team to understand how AI EdgeLabs' security approach can help your distributed edge infrastructure to stay protected against cyber threats.